Generate API tests with Katalon Studio's AI (beta)

This document introduces AI-powered API test generation and report viewing. By importing an OpenAPI file, you can have Katalon's AI agent automatically generate and execute tests, then view a results report without any test artifacts being created or stored in the project.

API test generation and report viewing with AI

By uploading an OpenAPI specification file, Katalon’s AI agent automatically generates tests, executes them immediately, and provides a results report—without creating or storing any test artifacts in your project.

- Import your OpenAPI spec file.

Importing the OpenAPI document creates an API Collection folder in the Object Repository.

This feature currently supports header-based authorization only. If your project uses other authentication types (NTLM, Digest, etc.), those mechanisms are not included in the generated tests.
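Before importing, you can check which credentials in your spec travel as a plain request header by inspecting its `components.securitySchemes` section. The sketch below is a minimal Python illustration, assuming an OpenAPI 3.x document already parsed into a dictionary. The scheme names and header name are hypothetical. Treating `apiKey` schemes sent in a header, plus HTTP `bearer` and `basic` schemes, as "header-based" is our assumption and not Katalon's documented rule.

```python
# Hypothetical minimal OpenAPI 3.0 document, already parsed into a dict.
# The scheme names, header name, and query parameter are illustrative.
SPEC = {
    "openapi": "3.0.3",
    "info": {"title": "Example API", "version": "1.0.0"},
    "paths": {},
    "components": {
        "securitySchemes": {
            "ApiKeyHeader": {"type": "apiKey", "in": "header", "name": "X-API-Key"},
            "BearerAuth": {"type": "http", "scheme": "bearer"},
            "ApiKeyQuery": {"type": "apiKey", "in": "query", "name": "api_key"},
        }
    },
}


def header_based_schemes(spec):
    """Return names of security schemes whose credentials are sent as a request header.

    Assumption: apiKey schemes with in == "header", plus HTTP bearer and basic
    schemes, count as header-based. This is an illustrative rule, not Katalon's.
    """
    schemes = spec.get("components", {}).get("securitySchemes", {})
    names = []
    for name, scheme in schemes.items():
        kind = scheme.get("type")
        if kind == "apiKey" and scheme.get("in") == "header":
            names.append(name)
        elif kind == "http" and scheme.get("scheme", "").lower() in ("bearer", "basic"):
            names.append(name)
    return names


print(header_based_schemes(SPEC))  # ['ApiKeyHeader', 'BearerAuth']
```

Schemes the function does not return, such as the query-string API key here, are worth checking manually against the generated tests.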

-

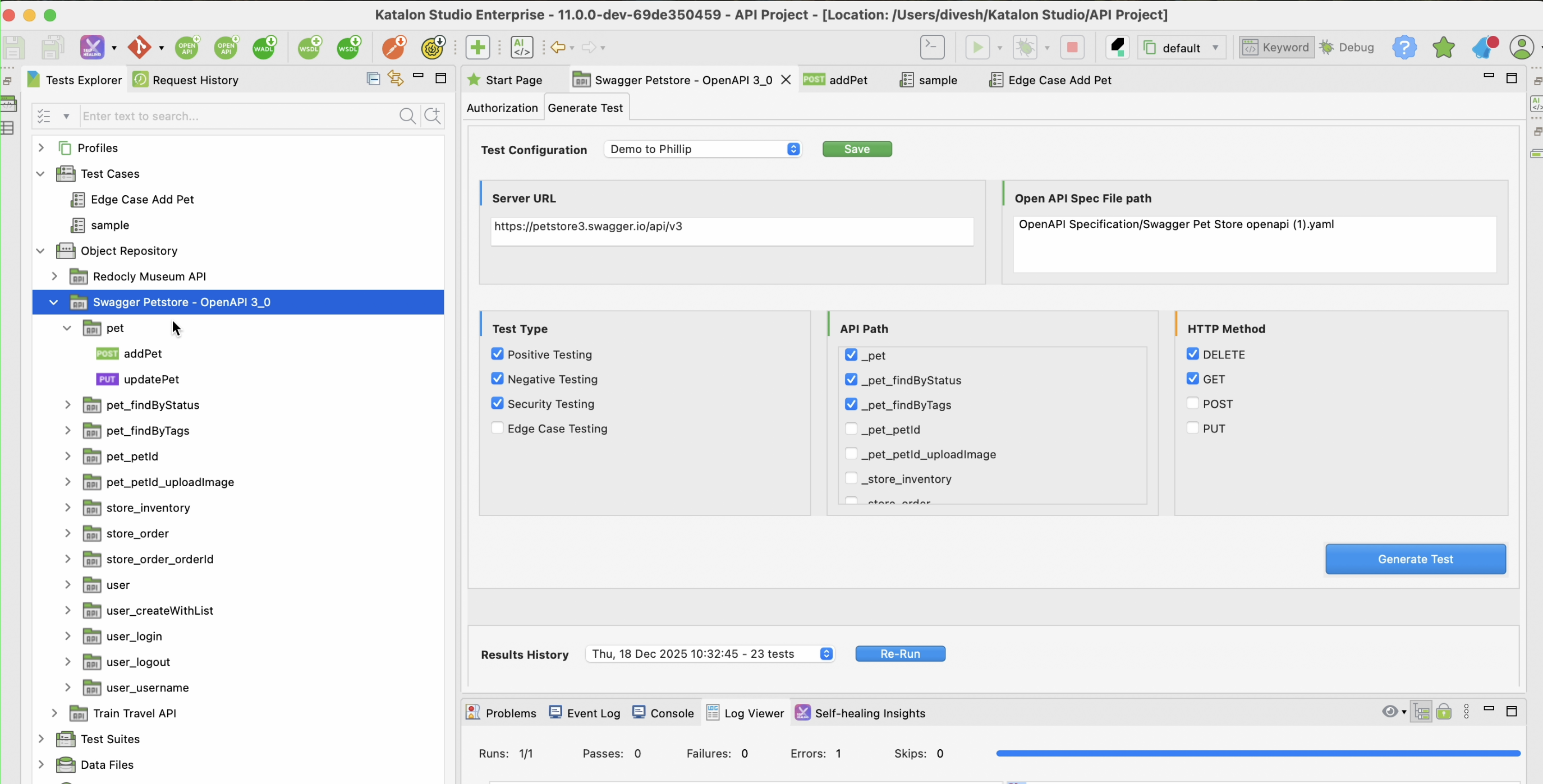

Double-click on this API collection folder, and select Generate Test tab.

- Select the test types (positive, negative, edge, and security testing), API paths, and HTTP methods to test. These options are read from your specification file. If you don't select any API path before generating, Katalon Studio (KS) automatically uses all paths to generate the tests.

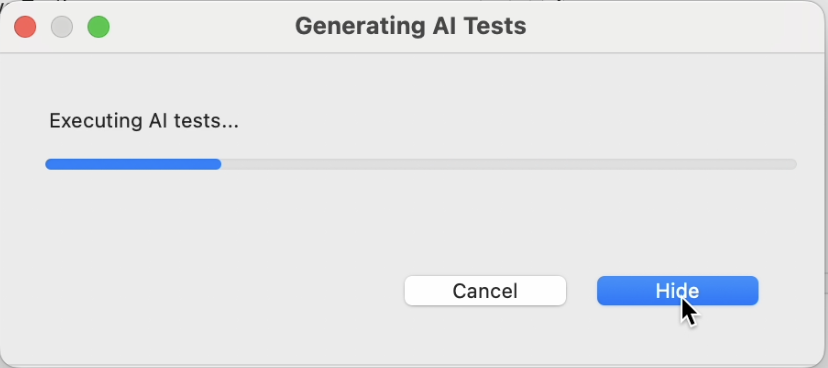
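The path and method options come from the `paths` section of the spec. The sketch below shows one way to enumerate (method, path) pairs from an OpenAPI 3.x document, including the documented default of using every path when none is selected. The spec contents and the function name are hypothetical, and treating an empty method selection as "all methods" is an assumption.

```python
# HTTP methods that OpenAPI 3.x allows as keys of a Path Item Object.
# Other keys (summary, parameters, servers, ...) are not operations.
HTTP_METHODS = ("get", "put", "post", "delete", "options", "head", "patch", "trace")

# Hypothetical spec with two paths.
SPEC = {
    "openapi": "3.0.3",
    "info": {"title": "Example API", "version": "1.0.0"},
    "paths": {
        "/pets": {
            "get": {"responses": {"200": {"description": "OK"}}},
            "post": {"responses": {"201": {"description": "Created"}}},
        },
        "/pets/{id}": {
            "get": {"responses": {"200": {"description": "OK"}}},
            "delete": {"responses": {"204": {"description": "Deleted"}}},
        },
    },
}


def list_operations(spec, selected_paths=None, selected_methods=None):
    """Return (METHOD, path) pairs to target.

    No selected paths means every path in the spec is used, as documented.
    No selected methods means every method is used (an assumption).
    """
    paths = spec.get("paths", {})
    chosen = selected_paths or list(paths)
    ops = []
    for path in chosen:
        item = paths.get(path, {})
        for method in HTTP_METHODS:
            if method in item and (not selected_methods or method.upper() in selected_methods):
                ops.append((method.upper(), path))
    return ops


print(list_operations(SPEC))
# [('GET', '/pets'), ('POST', '/pets'), ('GET', '/pets/{id}'), ('DELETE', '/pets/{id}')]
print(list_operations(SPEC, selected_paths=["/pets"], selected_methods={"GET"}))
# [('GET', '/pets')]
```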

- Click Generate Test. Katalon Studio uses AI to generate tests from the OpenAPI spec file and then executes them immediately. A progress bar shows the status; click Hide to let KS continue working in the background.

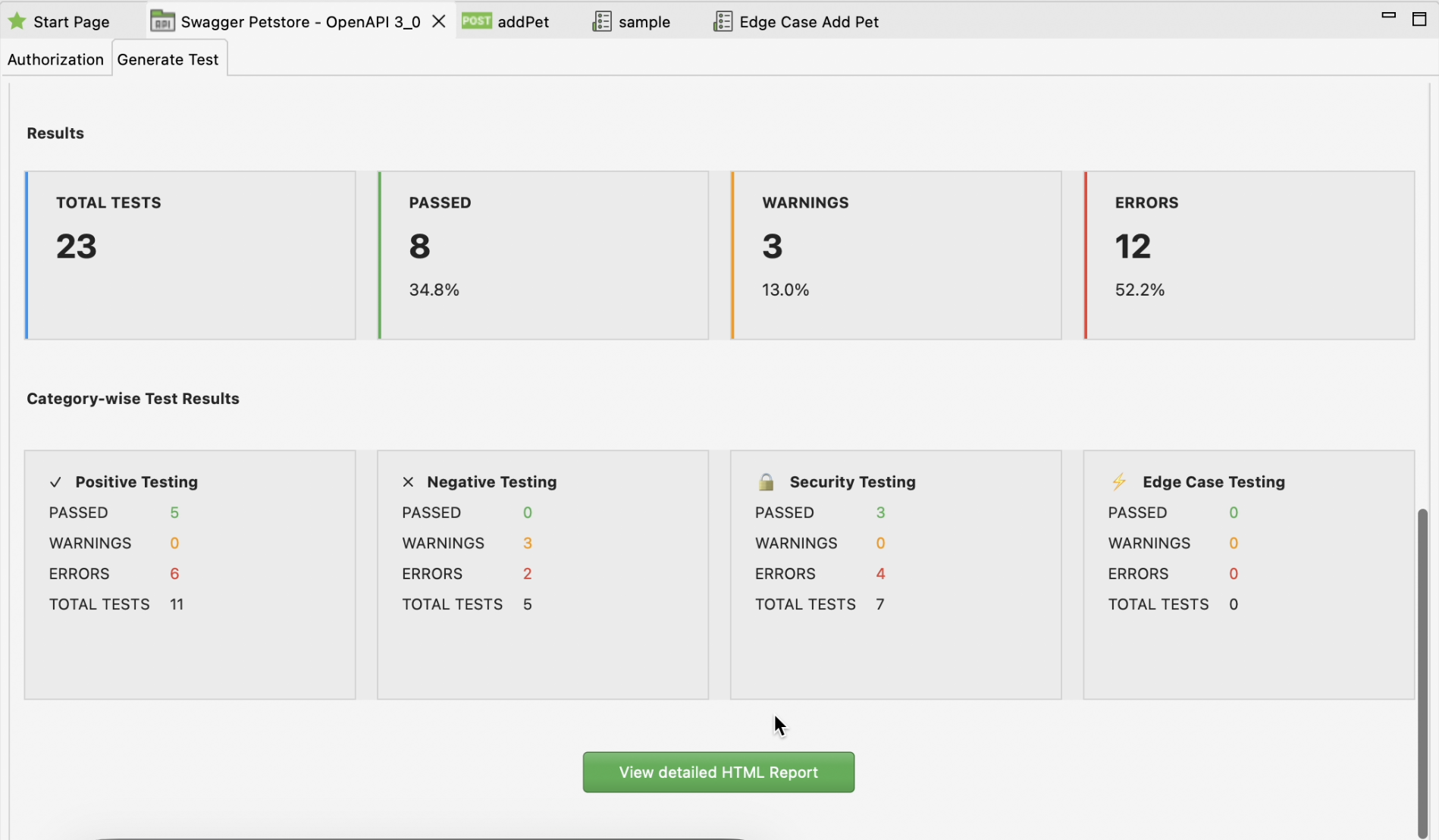

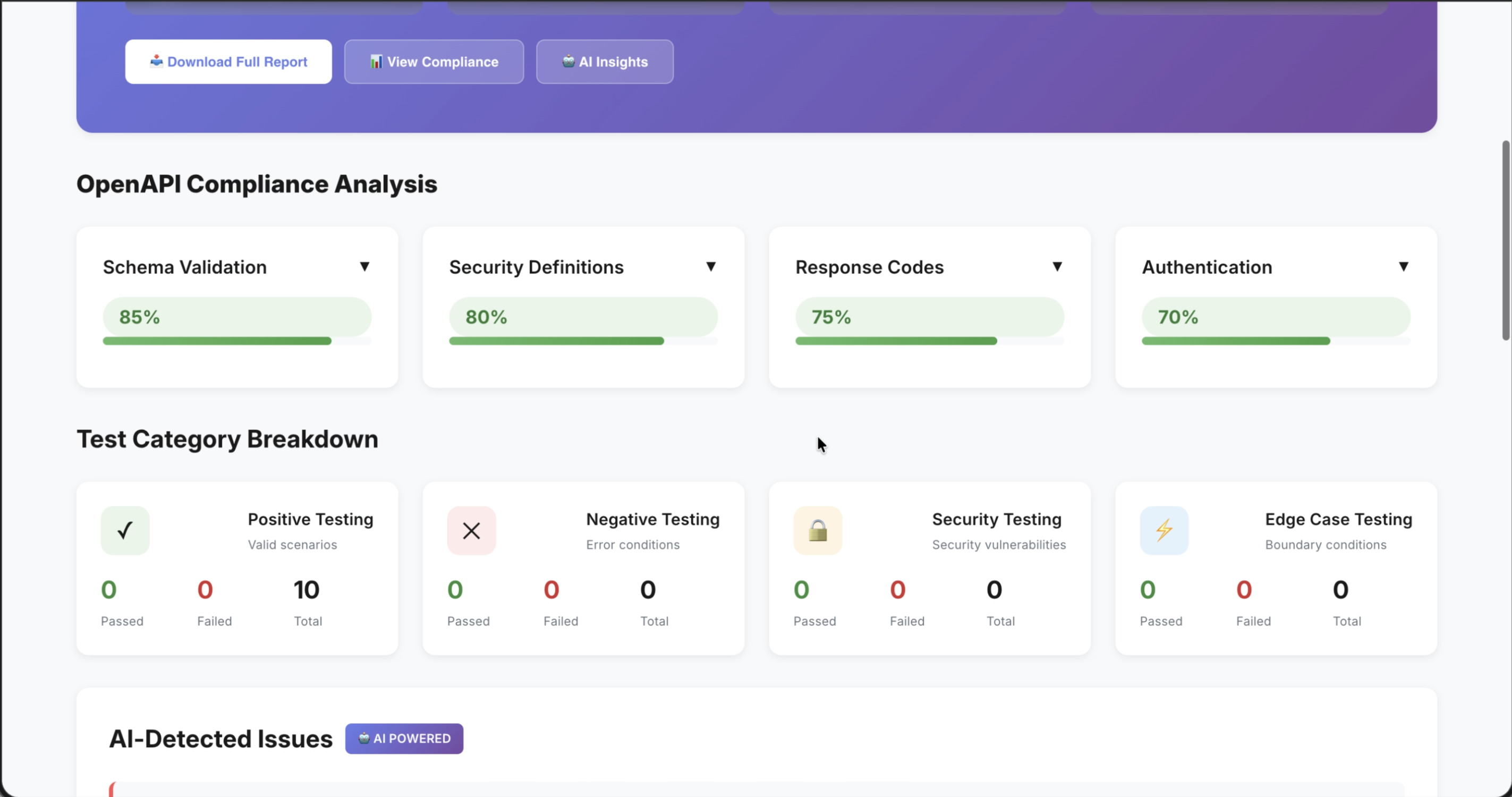
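Katalon's AI chooses the actual test inputs, and its method is not described here. Purely to illustrate what the four test types usually mean, the sketch below derives one request body per type from a small JSON Schema. The schema, field names, payloads, and the `sample_bodies` helper are all hypothetical, and the helper assumes every property is a string.

```python
# Hypothetical request-body schema (the JSON Schema subset used by OpenAPI).
PET_SCHEMA = {
    "type": "object",
    "required": ["name"],
    "properties": {
        "name": {"type": "string", "minLength": 1, "maxLength": 20},
        "tag": {"type": "string", "maxLength": 10},
    },
}


def sample_bodies(schema):
    """Build one illustrative body per test type.

    Assumes every property is a string; a real generator would cover all types.
    """
    props = schema.get("properties", {})
    required = schema.get("required", [])
    valid = {name: "a" for name in props}
    return {
        # Positive: every field present and within its constraints.
        "positive": valid,
        # Negative: the first required field is omitted.
        "negative": {k: v for k, v in valid.items() if k not in required[:1]},
        # Edge: each string sits exactly at its maxLength (length 1 if absent).
        "edge": {name: "a" * p.get("maxLength", 1) for name, p in props.items()},
        # Security: an injection-style string in every field.
        "security": {name: "' OR '1'='1" for name in props},
    }


bodies = sample_bodies(PET_SCHEMA)
print(bodies["negative"])  # {'tag': 'a'}
print(len(bodies["edge"]["name"]))  # 20
```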

- When the run finishes, a report summary appears.

You can also open the full HTML report, which includes more detail on scores, compliance analysis, errors, logs, and more.

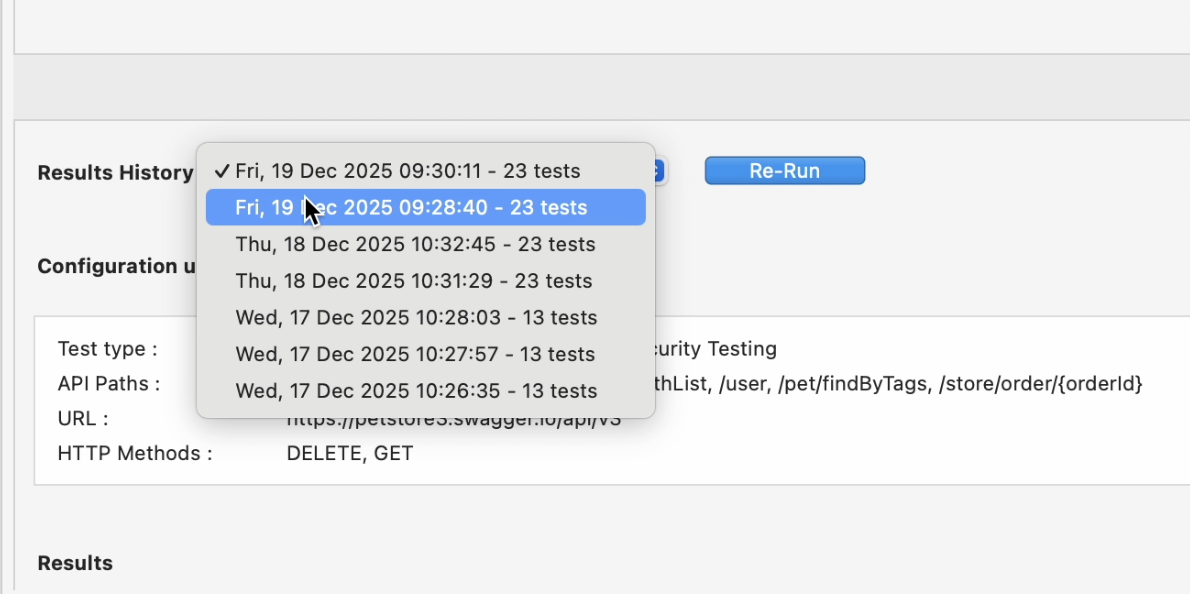

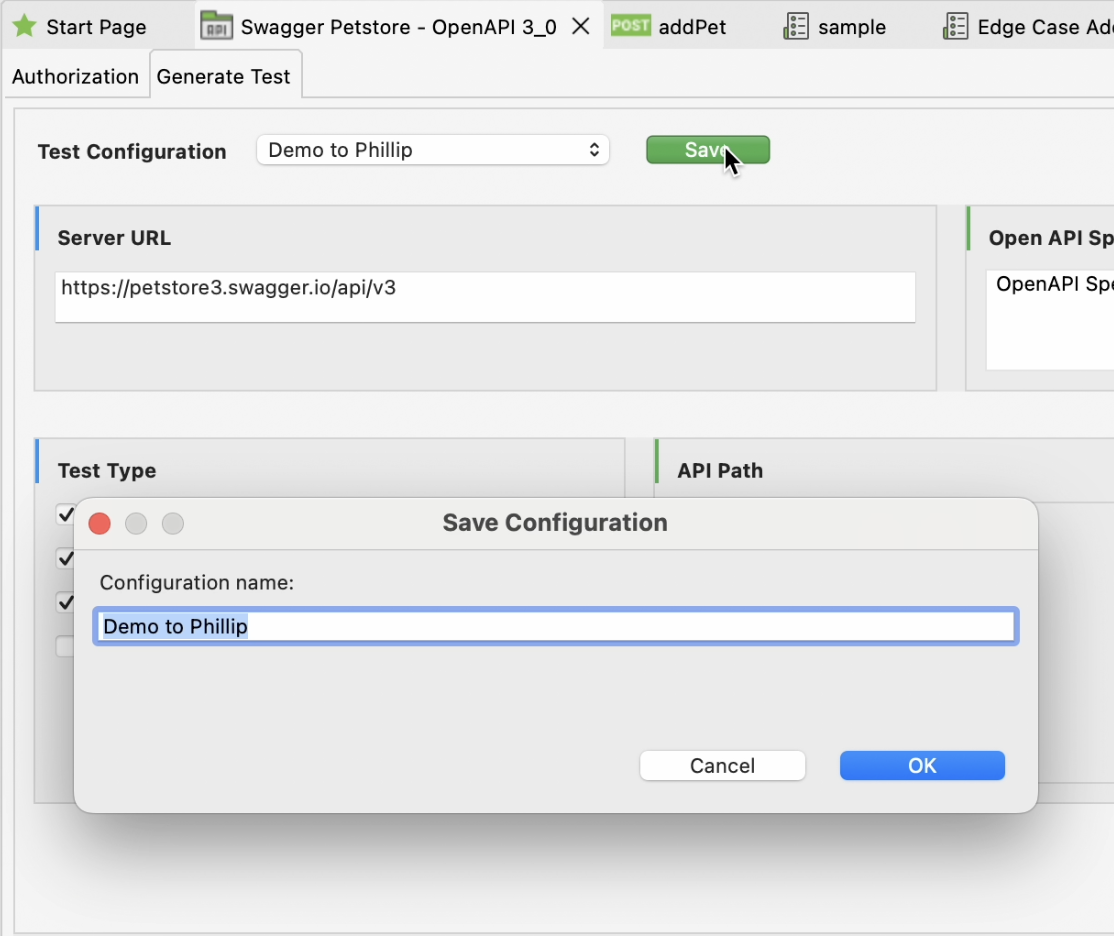

After reviewing the results, you can save the test generation configuration and rerun it later with the same settings.

This is useful for validating fixes after API updates and comparing results across multiple iterations without reconfiguring the test setup each time.
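If you record per-test outcomes from each run (for example, by noting them from the HTML report), a small comparison like the sketch below highlights what changed after an API fix. The dictionary shape, test names, and the `PASSED`/`FAILED` strings are hypothetical; Katalon Studio does not necessarily expose results in this form.

```python
def compare_runs(previous, current):
    """Compare two runs given as {test_name: "PASSED" | "FAILED"} maps.

    The result format is hypothetical; adapt it to whatever you collect.
    """
    fixed = sorted(t for t, s in current.items() if s == "PASSED" and previous.get(t) == "FAILED")
    regressed = sorted(t for t, s in current.items() if s == "FAILED" and previous.get(t) == "PASSED")
    new = sorted(t for t in current if t not in previous)
    return {"fixed": fixed, "regressed": regressed, "new": new}


# Hypothetical results from two runs of the same saved configuration.
run_1 = {
    "GET /pets positive": "PASSED",
    "POST /pets negative": "FAILED",
    "GET /pets/{id} edge": "PASSED",
}
run_2 = {
    "GET /pets positive": "PASSED",
    "POST /pets negative": "PASSED",
    "GET /pets/{id} edge": "FAILED",
    "DELETE /pets/{id} security": "PASSED",
}
print(compare_runs(run_1, run_2))
# {'fixed': ['POST /pets negative'], 'regressed': ['GET /pets/{id} edge'], 'new': ['DELETE /pets/{id} security']}
```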